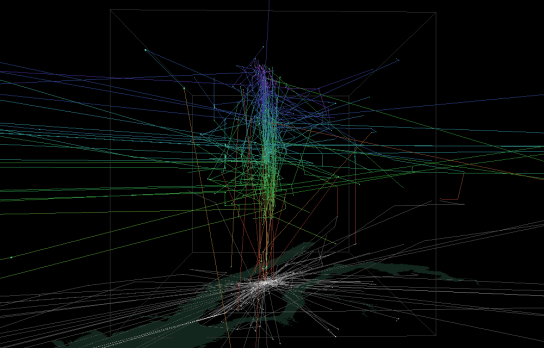

This post continues my quest of exploring the spatial dimension of Twitter streams. I wanted to try one of the classic spatio-temporal visualization methods: space-time cubes, where the vertical axis represents time while the other two axes map space. Like the two previous examples, this visualization is written in pyprocessing, a Python port of the popular Processing environment.

This space-time cube shows Twitter trajectories that contain at least one tweet in New York's Times Square. The 24-hour day starts at the bottom of the cube and continues to the top. Trajectories are colored based on the timestamp of their first tweet.
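To make the mapping concrete, here is a minimal sketch of how tweets could be placed in such a cube. It is not the code behind the image: the trajectory layout (a list of `(lon, lat, timestamp)` tuples), the `TIMES_SQUARE` bounding box values, the function names, and the hue-based coloring are all assumptions made for illustration.

```python
import colorsys
from datetime import datetime

SECONDS_PER_DAY = 24 * 60 * 60

# Assumed bounding box around Times Square: (lon_min, lat_min, lon_max, lat_max).
# The values are illustrative, not taken from the original visualization.
TIMES_SQUARE = (-73.9880, 40.7550, -73.9835, 40.7600)


def seconds_since_midnight(ts):
    """Time of day of a datetime, in seconds."""
    return ts.hour * 3600 + ts.minute * 60 + ts.second


def in_bbox(lon, lat, bbox):
    """True if the point lies inside the bounding box."""
    lon_min, lat_min, lon_max, lat_max = bbox
    return lon_min <= lon <= lon_max and lat_min <= lat <= lat_max


def passes_through(trajectory, bbox):
    """True if at least one tweet of the trajectory falls inside bbox."""
    return any(in_bbox(lon, lat, bbox) for lon, lat, _ in trajectory)


def to_cube(lon, lat, ts, extent, size=1.0):
    """Map a tweet to cube coordinates.

    x and y are the position scaled linearly into the map extent,
    z is the time of day: 0 at midnight (bottom), size at the end of the day (top).
    """
    lon_min, lat_min, lon_max, lat_max = extent
    x = (lon - lon_min) / (lon_max - lon_min) * size
    y = (lat - lat_min) / (lat_max - lat_min) * size
    z = seconds_since_midnight(ts) / SECONDS_PER_DAY * size
    return x, y, z


def start_color(trajectory):
    """RGB color (components in 0..1) derived from the first tweet's time of day."""
    hue = seconds_since_midnight(trajectory[0][2]) / SECONDS_PER_DAY
    return colorsys.hsv_to_rgb(hue, 1.0, 1.0)
```

Each trajectory that passes the `passes_through` filter can then be drawn as a 3D polyline through the points returned by `to_cube`, using a single color from `start_color` for the whole line.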

Additionally, all trajectories are drawn in the context of the coastline (data: OpenStreetMap) on the bottom of the cube.

While there doesn’t seem to be much going on in the early morning hours, we can see quite a lot of coming and going during the afternoon and evening. From the bunch of vertical lines over Times Square, we can also assume that some of our tweet authors spent a considerable amount of time at and near Times Square.

I’ve also created an animated version. Again, I recommend watching it in HD.